Overview

Easily build and host your own Analytics platform!

After installing this Laravel package, you can collect business and application "events" and store them in Elasticsearch. An event can be "item_purchased", "page_viewed", "button_clicked", or anything else you want to track.

Once you have collected these metrics, you would normally query them and build reports. You could do the same by creating MySQL tables and inserting the data there, but as your application and traffic grow, that approach runs into the following issues:

Inability to scale-out the write operations

As your traffic grows, so does the number of INSERT, UPDATE and DELETE operations. MySQL slaves and redundancy only let you distribute read queries across multiple servers; write operations still have to go through the master, which is a single point of failure and can easily become a scalability bottleneck for your application. There are ways to scale write operations in a traditional RDBMS, but they are very expensive to set up, host and maintain.

Dataset is too large to fit on a single machine

Normally, when a table grows large, we just purge or truncate it. But what if you can't afford to delete data? If, for example, you need to drill down and analyze different metrics for the last 5 years, you are out of luck querying all that data in MySQL (good luck performing an SQL join on a table with 10 million rows!). If your database holds, say, 200GB of data, MySQL performs joins and lookups efficiently only when the data it touches fits in memory, and that is usually not possible for a dataset of this size even if you have tons of slaves.

Application response time degrades drastically as you collect more metrics

If you want to be as detailed as possible, to the point of tracking individual mouse moves as visitors hover across your site (a bit extreme!), you will realize that you can easily DDoS your own application and drastically slow it down, because each request has to insert many rows into your MySQL database before it completes. Combine that with an enormous table and you have a perfect recipe for disaster.

Introducing Evorg

Evorg is a Laravel package that lets you collect and analyze business and analytics data and store it in a highly scalable manner.

Features

- > Easy syntax and intuitive methods of data collection.

- > Can be easily scaled out in large deployments, even if you have terabytes of data. This is because Evorg allows you to distribute both write and read operations across multiple servers.

- > Self-hosted, and thus a cheaper solution than other open-source and commercial alternatives (Google Analytics, Segment, Hadoop, etc.).

- > Schema-less JSON data, which means you can dynamically pass any fields.

- > NoSQL solution that lets you flexibly reshape your fields at any time.

- > Records the tracking data asynchronously in a background queue, so it doesn't affect the response time of your web application.

- > Supports "sharding" of your data, so that even if it grows too large to fit on a single machine, the data is split across multiple servers and you can still query it as if it were a single database.
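To make the schema-less and background-queue points above more concrete, here is a minimal sketch in plain PHP. It does not use Evorg's actual API: the `$queue` variable, the `drainQueue()` helper and the field names are all hypothetical, and an in-memory `SplQueue` stands in for a real background queue.

```php
<?php
// Hypothetical sketch: none of these names come from Evorg itself.

// Two events with different shapes; no schema is declared up front.
$events = [
    ['event' => 'item_purchased', 'sku' => 'A-100', 'price' => 19.99],
    ['event' => 'page_viewed', 'url' => '/pricing', 'referrer' => 'newsletter'],
];

// Stand-in for a background queue: the web request only pushes a job.
$queue = new SplQueue();
foreach ($events as $event) {
    $event['recorded_at'] = gmdate('c'); // timestamp added at collection time
    $queue->enqueue(json_encode($event));
}

// Stand-in for a worker that drains the queue later. A real worker would
// send each JSON document to the storage backend instead of returning it.
function drainQueue(SplQueue $queue)
{
    $documents = [];
    while (!$queue->isEmpty()) {
        $documents[] = json_decode($queue->dequeue(), true);
    }
    return $documents;
}

$stored = drainQueue($queue);
```

The request path only pays for a `json_encode` and an enqueue; the slower write to storage happens in the worker, which is why tracking more events need not slow down page responses.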

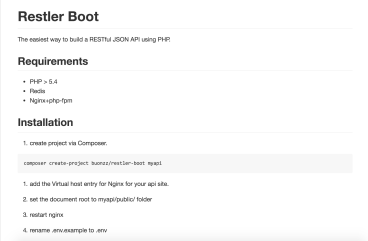
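The sharding idea from the feature list can be sketched roughly as follows. This is only an illustration under stated assumptions: `shardFor()` and the in-memory `$shards` array are hypothetical, `crc32` is a stand-in hash, and Elasticsearch applies its own hashing and routing internally.

```php
<?php
// Hypothetical sketch of hash-based routing; not Evorg or Elasticsearch code.

function shardFor($routingKey, $shardCount)
{
    // Mask to a non-negative value before taking the modulus.
    return (crc32($routingKey) & 0x7fffffff) % $shardCount;
}

$shardCount = 3;
$shards = array_fill(0, $shardCount, []);

// Write path: each document lands on exactly one shard.
foreach (['user-1', 'user-2', 'user-3', 'user-4', 'user-1'] as $userId) {
    $shards[shardFor($userId, $shardCount)][] = ['event' => 'page_viewed', 'user' => $userId];
}

// Read path: a query fans out to every shard and merges the partial results,
// so the caller sees one logical dataset.
$all = [];
foreach ($shards as $partial) {
    $all = array_merge($all, $partial);
}
```

Because the same routing key always hashes to the same shard, writes spread across servers while reads can still be answered by combining every shard's results.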

Installation

Evorg is a Laravel package and has a few requirements to run:

- PHP >= 5.5.9

- Elasticsearch server

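Install the package with Composer:

    composer require buonzz/evorg
    composer update

Register the service provider (in the providers array of config/app.php):

    Buonzz\Evorg\ServiceProvider::class,

Register the facade alias (in the aliases array of config/app.php):

    'Evorg' => Buonzz\Evorg\EvorgFacade::class

Publish the package configuration:

    php artisan vendor:publish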